Testing Endurance for Team Sport Athletes

Introduction

There are numerous endurance tests that a strength and conditioning coach (S&C from now on) might use, so many that it is confusing which one to choose and why. For that reason, I decided to write this article: I want to outline my reasoning and rationale when selecting an endurance test.

First of all, we are going to assume that the tests are reliable. I don’t want to go into assessing the reliability and sensitivity of the tests (although that is of great importance and something you had better be doing as an S&C coach and/or sport scientist), but rather discuss other points of interest.

Second, I am writing this for an S&C coach working in a running-based team sport (e.g. rugby, soccer, Aussie rules, handball, etc.). So keep those two things in mind when reading my rant.

Why are we testing

Why are we testing in the first place? Do we actually need to test? NO! Sometimes we don’t need to test (for example when working with developmental athletes, recreational athletes and so forth). Other times, testing is very much needed.

Here are my top reasons for testing:

- To identify things I need to be working on with a particular athlete (i.e. profiling, rate limiter analysis)

- To help with training prescription

- To have a baseline measure that could be useful in return-to-play protocols

- To cover my ass

And that is pretty much the content of this article. I will cover each rationale separately and then I will give my suggestions for potential tests that could be used. Besides that I will talk about Occam’s Razor, when to test, embedded testing with GPS, “not giving a fuck” issues with players/clubs, fatigue and injury risk, pacing and so forth.

These could be applied to other testing as well (e.g. strength, speed, etc.), but I wanted to keep this article specific rather than too general, so I will stick solely to endurance.

Also bear in mind that these are not “scientific”, “evidence-based practices” and other click-bait rationales – just my own viewpoints.

So grab a pint of beer and let’s go.

Things to work on (Profiling)

The first reason for testing, in my humble opinion, is to help with creating objectives of training (i.e. goals) by performing a strength-weakness analysis. This is easier said than done, of course. For example, what are our frames of reference? Other players in the team? Other players in the league? Other players in that position? What about the “spread” of those reference points? And then we have more practical questions: what are we doing to address those weaknesses and improve strengths? And will those yield improvements in performance or robustness of the player(s)?

To profile a player one needs a battery of tests that assess the physical characteristics identified as important. Having these available, one can identify certain things that might be worth working on with a particular athlete. As I alluded to in the previous paragraph, this is very nice in theory and much harder to do in practice, but it definitely gives us something to work with. Using this battery of tests we can also identify clusters of athletes (if there are any) and group them a bit better (this can work well with injury/movement screening). Some coaches base their whole periodization system not on following pre-defined blocks (e.g. strength, hypertrophy, power, etc.), but on making the program suit the current needs of the player (see more in Agile series, especially this video and look for Al Vermeil system).
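To make the “frame of reference” and “spread” questions a bit more concrete, here is a minimal sketch that expresses each player's test result as a z-score against the squad. The player names, the scores and the -1 cut-off are all made up for illustration, and the squad is only one of the possible reference groups mentioned above.

```python
from statistics import mean, stdev


def z_scores(results):
    """Express each result as standard deviations from the squad mean.

    results: dict mapping player name -> test score (e.g. metres covered).
    """
    values = list(results.values())
    mu, sd = mean(values), stdev(values)  # sample SD; needs at least 2 players
    if sd == 0:
        raise ValueError("all scores are identical, so there is no spread")
    return {name: (value - mu) / sd for name, value in results.items()}


# Hypothetical squad results (metres) in an intermittent running test
squad = {
    "Player A": 1600,
    "Player B": 1800,
    "Player C": 2000,
    "Player D": 2200,
    "Player E": 2400,
}

profile = z_scores(squad)

# Arbitrary cut-off: more than 1 SD below the squad mean gets a closer look
flagged = [name for name, z in profile.items() if z < -1.0]

for name, z in profile.items():
    print(f"{name}: z = {z:+.2f}")
print("Worth a closer look:", flagged)
```

Swap the reference group (league, position) and the same athlete can land on a different side of the cut-off, which is exactly why the choice of reference matters.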

One thing that pisses me off is, of course, the way sports scientists visualize those profiles. Please, please stay away from radar/spider charts and pie charts.

Anyway, the general idea of profiling is to give us a big picture of the state of our athletes and to help us decide what needs to be done with particular individuals. Making practical sense out of this data involves much more than can be written here, and it needs to be evaluated while juggling a lot of other factors and constraints.

Things that hold you back (Rate Limiters)

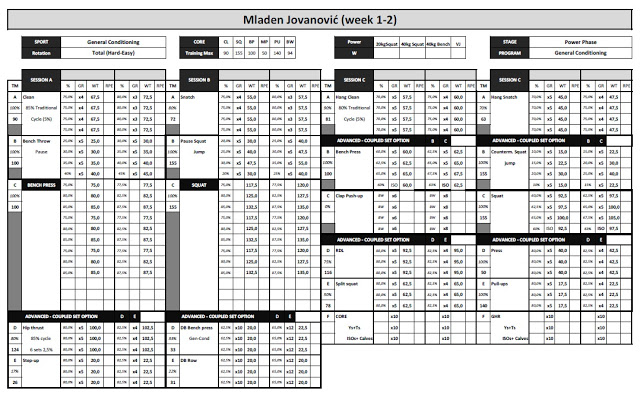

Having a big picture in place, we are now interested in identifying the rate limiter. By this, I refer to the factor that might limit performance (in this case endurance performance). For example, a given athlete reached 2000m in the YoYo Recovery Level 1 test. We have identified that as a weakness and we are now interested in identifying the limiting factor (why this is so).

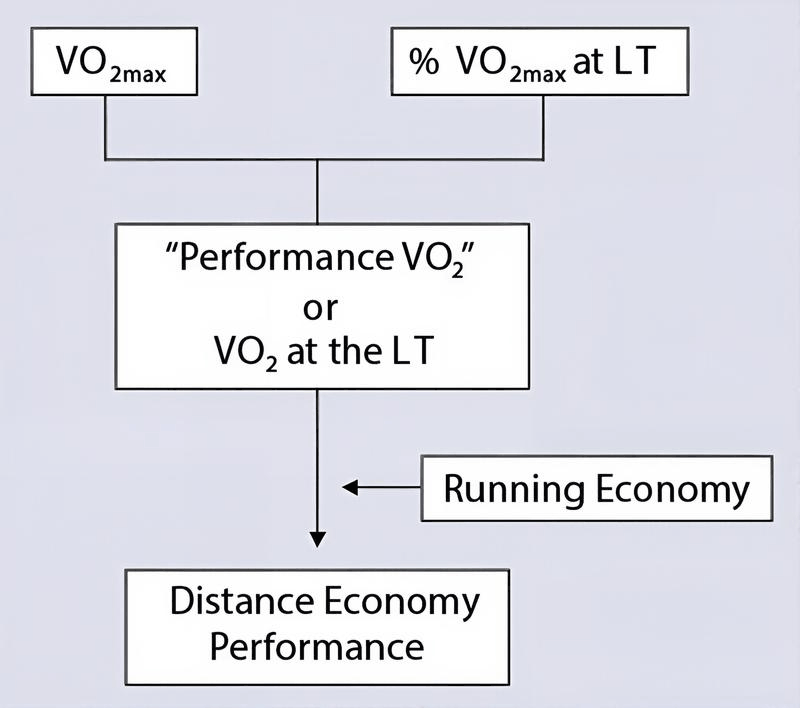

The first thing to check is motivation: did the athlete give his best? Once we are sure that the level of motivation is satisfactory, we can proceed with other factors. There are multiple models that could be used to explain the limiter, one of which is the following:

So the limit for this particular athlete can be explained using different models. For example, the athlete might have good aerobic capacity but very poor movement efficiency (for example, a very inefficient change of direction that costs him time and energy), or his recovery between reps (the 10 sec between runs in the YoYo) could be subpar and cost him the score.

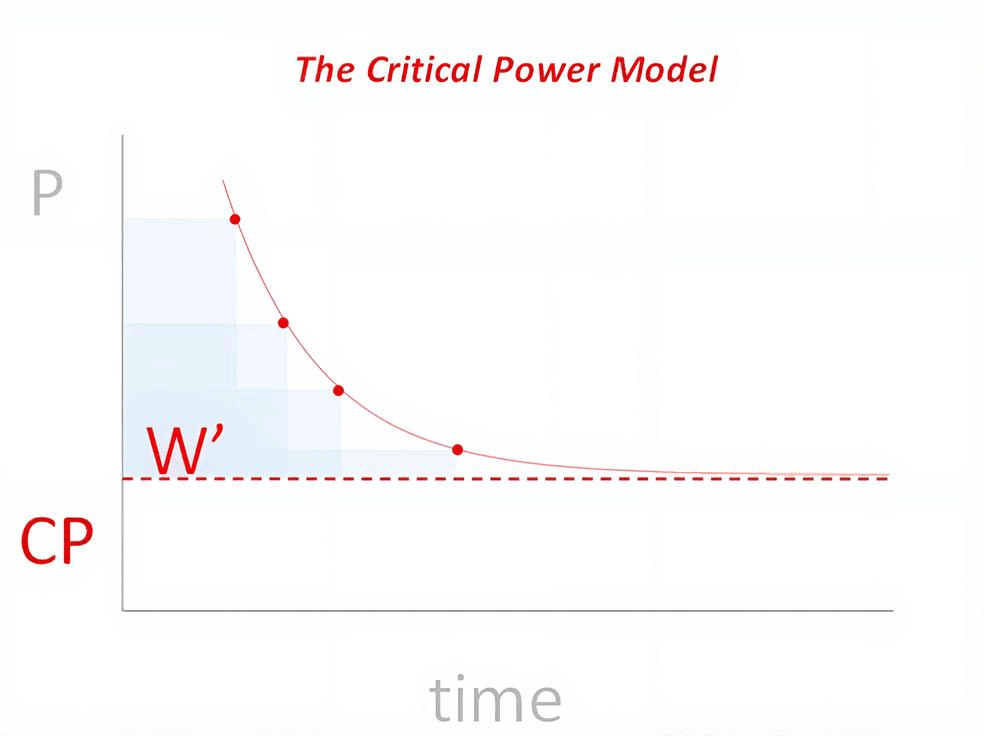

We can also rely on another famous model, Critical Power (or Critical Velocity) model:

In this model we have two components. The first is critical velocity (CV): the velocity (or power) that can be maintained for a longer period of time, usually somewhere around the midpoint between vVO2max/MAS and the velocity at the Gas Exchange Threshold (vGET, vLT, and so forth). The second is W’ or D’, which can be called the “anaerobic reserve” or “nitro”. These two components (please bear in mind that there are different models that have more components) explain endurance performance pretty well. For example, we might have two runners/athletes with the same performance but different CV and D’, so one might be more “aerobic” and have less “kick”, and the other vice versa.
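To make the two components a bit more tangible, here is a minimal sketch of the simplest linear form of the model, distance = D’ + CV × time, fitted to a few maximal efforts with ordinary least squares. The function name and the trial numbers are made up for illustration, and the linear distance-time form is only one of several ways to fit this model.

```python
def fit_critical_velocity(trials):
    """Fit distance = d_prime + cv * time by ordinary least squares.

    trials: list of (distance_m, time_s) pairs from maximal efforts.
    Returns (cv in m/s, d_prime in m).
    """
    if len(trials) < 2:
        raise ValueError("need at least two trials")
    n = len(trials)
    mean_t = sum(t for _, t in trials) / n
    mean_d = sum(d for d, _ in trials) / n
    sxx = sum((t - mean_t) ** 2 for _, t in trials)
    if sxx == 0:
        raise ValueError("trials must have different durations")
    sxy = sum((t - mean_t) * (d - mean_d) for d, t in trials)
    cv = sxy / sxx                   # slope: critical velocity
    d_prime = mean_d - cv * mean_t   # intercept: the "nitro" in metres
    return cv, d_prime


# Hypothetical time trials: (distance in m, time in s)
trials = [(1200, 250.0), (2000, 450.0), (3200, 750.0)]
cv, d_prime = fit_critical_velocity(trials)
print(f"CV = {cv:.2f} m/s, D' = {d_prime:.0f} m")
```

The slope of the line is CV and the intercept is D’, so a steeper line means a more “aerobic” athlete and a higher intercept means more “nitro”.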

The point I am trying to make here is that we might have two athletes who have the same performance in a test but are limited by different factors (i.e. rate limiters). Another point to be made is that there is NO SINGLE model of performance. We must rely on multi-model thinking: understand that we are dealing with models, and use several of them to help our reasoning and decision making.

Then another problem emerges: how complex do we need to go (or, as I love to say, how deep down the rabbit hole do we need to go)? Generally, the higher the level of the athlete, the deeper we need to go to look for potential limiters, and the smaller the gains in performance we get for it. This reminds me of a fractal and I have addressed this in Grand Unified Theory of Sports Performance article and video.
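Here is a small sketch of the “same performance, different limiter” idea, using the linear CV model rearranged as time = (distance - D’) / CV. The two athletes and their numbers are hypothetical, picked so that they tie over 1500 m.

```python
def predicted_time(distance_m, cv, d_prime):
    """Time (s) to cover a distance under the linear critical velocity model."""
    return (distance_m - d_prime) / cv


# Two hypothetical athletes: (CV in m/s, D' in m)
athletes = {
    "aerobic": (4.5, 150.0),  # higher CV, smaller "nitro"
    "kick": (4.0, 300.0),     # lower CV, bigger "nitro"
}

for distance in (800, 1500, 3000):
    times = {
        name: predicted_time(distance, cv, d_prime)
        for name, (cv, d_prime) in athletes.items()
    }
    summary = ", ".join(f"{name} {t:.0f} s" for name, t in times.items())
    print(f"{distance} m: {summary}")
```

Both come out identical over 1500 m, but the “kick” athlete is quicker over 800 m and the “aerobic” athlete is quicker over 3000 m. A single test distance would hide that difference completely.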

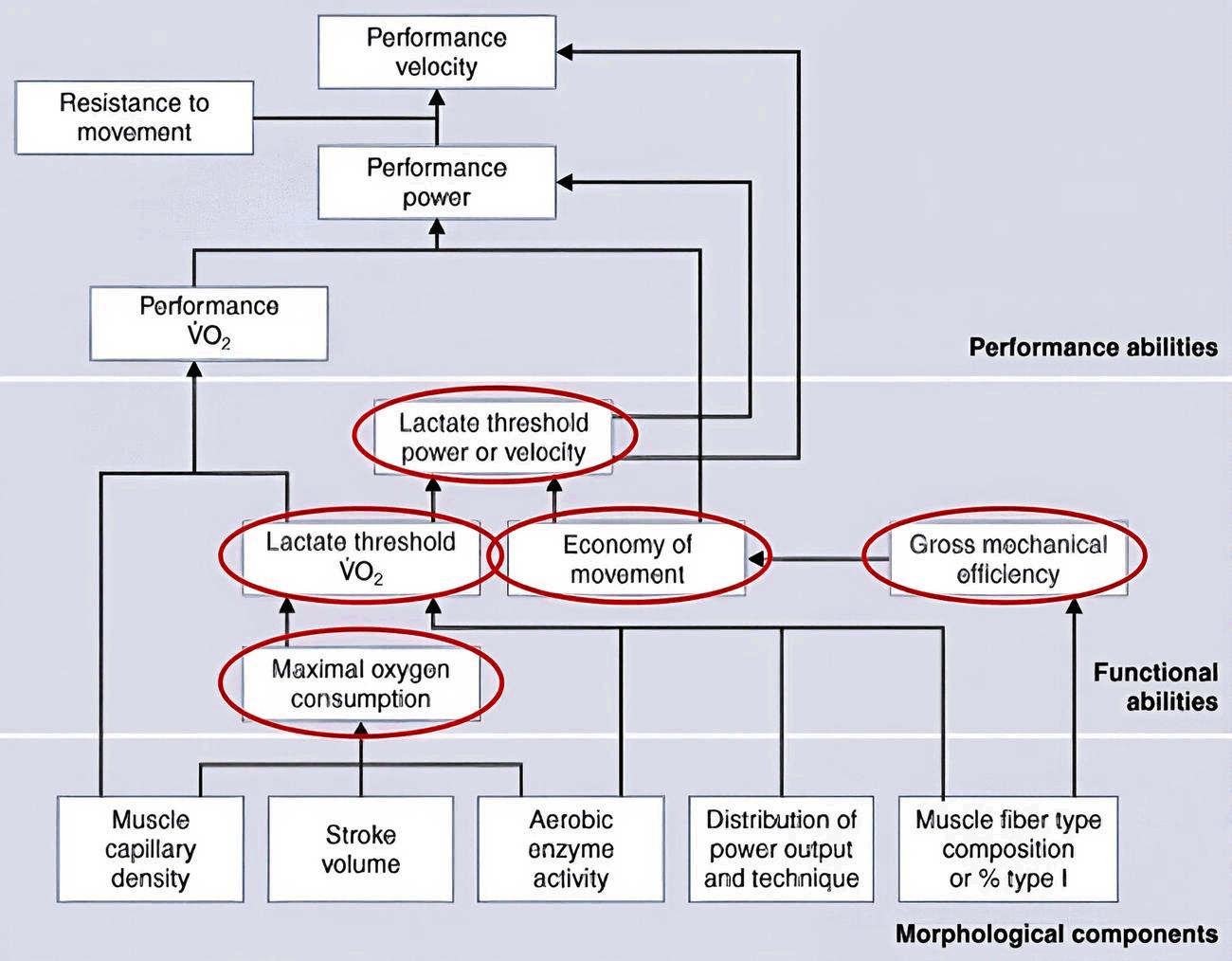

For example, let’s expand the simple physiological model from the above:

We can see that we can go really deep into the analysis of rate limiters. The questions are “how deep?” and “which test to select?”. I have a couple of heuristics that I use in this regard.

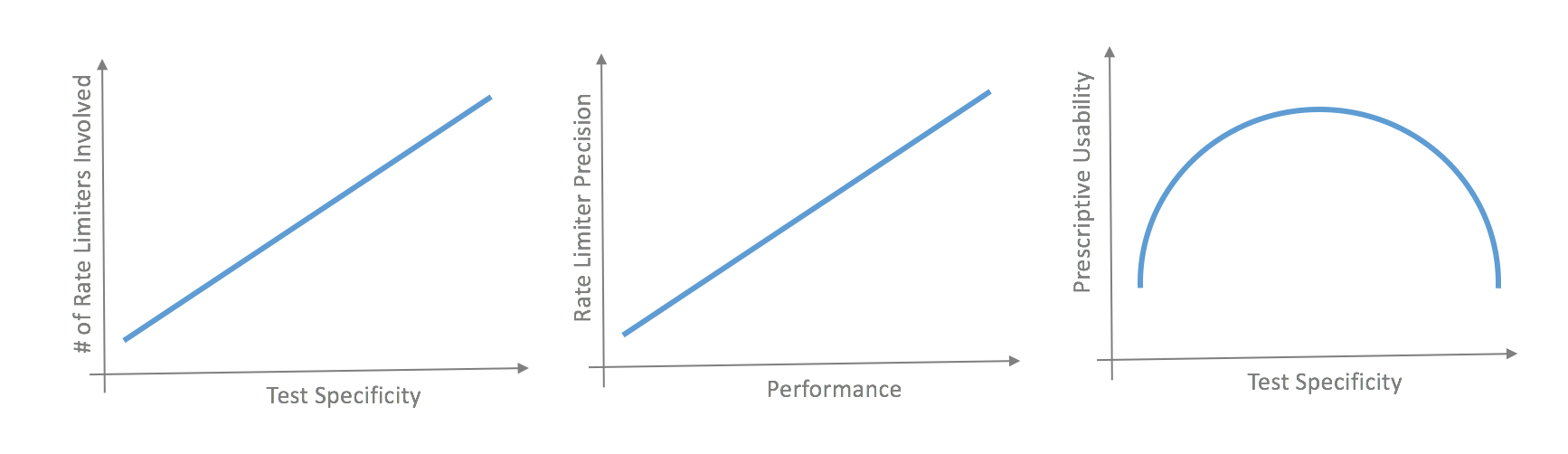

- The more “specific” the test is, the more complexity we introduce in identifying the potential limiters

- The more “general” and “laboratory” the test is, the clearer we are about the limiters, but we lose the practical application of such a test (see the next point)

- The lower the level of the athlete, the less we need to go down the rabbit hole, and vice versa

So the conundrum that needs to be answered is “Which test can I use that is sport and training specific enough, but also simple enough to give me just enough information to help me plan the training for this level of athlete?”

For example, if I choose the YoYo test, I might not be able to use the data to plan the training (see the next point), and I might not know whether the performance is limited by poor mechanics, poor aerobic capacity, poor anaerobic reserve, poor intra-test recovery and so forth. If I choose the 1500m time-trial, I might not know how the athlete will perform in COD conditioning drills, whether his performance was limited by bad pacing, and so forth. Besides, the test is not “specific” enough to “include” specific limiters, and the players and staff might complain. In the Beep test (both straight line and ramp protocols), if the stages are too short and the increase in velocity is too quick, the limiters might be weighted differently. For example, an athlete with a smaller aerobic capacity but a bigger anaerobic nitro might reach higher speeds because the stages are not long enough for him to exhaust his nitro, and we might get the feeling that he is aerobically fit when he is not. The more precise you want to be in identifying the limiter, the more tests you need to use. An interesting paper dealing with some of those issues is by Haydar et al.

So the decision making in selecting the test must come down to what we need to get the job done, and what gives us just enough information to make better training decisions. This will change as the level of the athletes changes, and probably as the implementation constraints change as well (how much time we have for testing, how frequently we can test, how much room for interventions we have and so forth). I believe this is similar to Occam’s Razor, and that decision making principle can be applied here as well.
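The stage-length issue in the Beep test can be illustrated with a toy simulation. This is a sketch under strong assumptions: a straight-line incremental test, D’ drained at a rate of (speed - CV) whenever speed is above CV, no other source of fatigue, and two hypothetical athletes with made-up numbers. It is not a validated model of any real protocol.

```python
def last_completed_speed(cv, d_prime, stage_s, start=3.0, step=0.25):
    """Speed (m/s) of the last fully completed stage in a toy incremental test.

    Assumes D' (metres) is drained by (speed - cv) * stage_s in every stage
    run above cv, and that the test ends when a stage cannot be paid for.
    """
    remaining = d_prime
    speed = start
    completed = None
    while True:
        cost = max(0.0, speed - cv) * stage_s  # metres of D' this stage needs
        if cost > remaining:
            return completed
        remaining -= cost
        completed = speed
        speed += step


# Hypothetical athletes
aerobic = {"cv": 4.6, "d_prime": 100.0}  # bigger engine, small "nitro"
nitro = {"cv": 4.0, "d_prime": 300.0}    # smaller engine, big "nitro"

results = {}
for stage_s in (30, 180):
    results[stage_s] = (
        last_completed_speed(stage_s=stage_s, **aerobic),
        last_completed_speed(stage_s=stage_s, **nitro),
    )
    print(f"{stage_s} s stages: aerobic {results[stage_s][0]} m/s, "
          f"nitro {results[stage_s][1]} m/s")
```

With these made-up numbers the “nitro” athlete finishes one stage higher on 30-second stages, while the “aerobic” athlete comes out ahead on 3-minute stages. Same two athletes, different ranking, purely because of the protocol.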

Being more prescriptive (what you need to get the job done)

Having a clue about what we need to be working on and what might be limiting performance is of utmost importance, but we also need to know how to plan/program the training to improve those things. As you might expect, here lies the danger as well.
