The Theory & The Reality of using GPS in Sports

I’ve always been a bit of a tech head, fascinated with technology long before I got into sports. That makes sense, considering my initial career path was as a games developer before the path I ended up choosing. Even during my time at a Premier League football club, I tried valiantly to get HRV incorporated, first with KubiosHRV (that probably didn’t help my campaign), then with BioforceHRV, Omegawave and iThlete. It was also during this time that I learnt the importance of a product that works “in the trenches”.

The Trenches

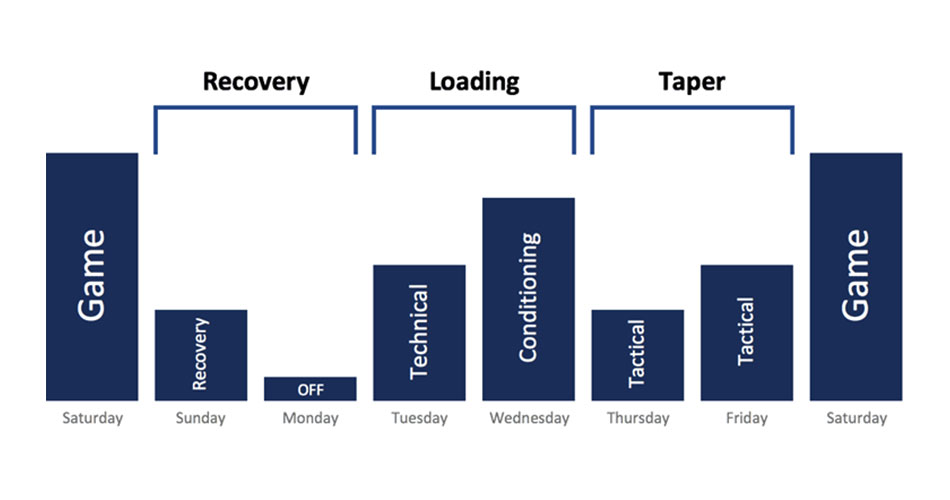

Think of a piece of equipment used during World War 1: it was all well and good having a great, well-engineered piece of kit at war, but if you couldn’t use it in the day-to-day drudgery of the trenches, it would get discarded. I’m reminded of an in-house presentation by one of the earliest sleep-tracker makers trying to find its way into professional football. One of their selling points was that coaches could assess a player’s sleep and decide whether he would play a game if he hadn’t had enough of it. I quietly turned round to the colleague next to me, and we gave each other a “good luck with that” smirk. Was I opposed to sleep monitoring? Of course not. As a sports scientist (or is that ex sports scientist now?), I know such data could be pivotal. But I’d worked in the trenches long enough to know that what sounds great in theory doesn’t always work in reality. If you really think a player is going to adhere to a piece of tech when it will tell the manager he hasn’t slept well because of a late night in the week, you haven’t worked a day with actual athletes (well, footballers anyway). When you’re in the trenches, you know the line between theory and application. You know if, when and how your perfect periodised model, based on copious amounts of studying Supertraining and a 12-week course on teaching the perfect snatch, will work in a team setting. You know that, as a soldier, you probably should have a jerry can full of water nearby, but realistically the flask on your belt will be your only source of water for a while.

It’s that experience of being in the trenches that brings me to GPS. First things first: chances are, if you’re reading this, you at least know the purpose and use of GPS in sports, even if you haven’t had a chance to use it. Second, from this moment on, I will never refer to it as “GPS”. I never liked the term, but of course I understand its popularity compared to “GNSS” (Global Navigation Satellite System, rather than the American-based “Global Positioning System”). My dislike comes from the growing importance of accelerometer data, with other motion-sensor data becoming almost as important as the navigation data. So what’s the best term? Personally, I prefer the official FIFA terminology of EPTS (Electronic Performance & Tracking Systems). I mean, who’s to say we’ll even be using GNSS in 10 years’ time? Better to use a more generic term.

In the last few years, the popularity of EPTSs has increased substantially. As the money flowing into sports (read: football) increases and product prices decrease, their use has spread from its initial domain of AFL and elite football to the lower leagues of team sports. Which is great, of course. Systems built for the rich can now be used by the poor (figuratively speaking, of course).

But therein lies my first “in the trenches” problem.

The Common Reality

One particular issue I’ve always found with many of the current systems is the lack of adjustment for what I deem the “common reality” of sports scientists. I mean, it’s wonderful to work for a top club, with multiple staff dedicated to sifting through the 300+ different metrics they have and analysing the data. Having a small team of GPS analysts, a handful of sports scientists and a few more performance analysts, all dedicated to analysing games and training sessions, is the dream, isn’t it? That’s without mentioning the 3-5 S&C coaches, nutritionists, etc. The ideal setup.

But that’s not the common reality now, is it? That’s a reality that I’d say less than 5% actually get to experience. For most sports scientists, life in the trenches means a team of just them, a physio and maybe a part-time intern or two, if they’re lucky. That’s it. When using the current systems, you get a sense of products made in a vacuum, for working conditions that 95% of sports scientists seldom experience, if ever.

As a long-time gamer, I remember the golden generation of the SEGA vs Nintendo days, where each new console release was trumpeted with proclamations of “look how many bits our console CPU has!” 8, 16, 32, 64, 128-bit (ah, my beloved Dreamcast, which wasn’t even really 128-bit): the more bits the better, was the message. Current systems now spread a similar message: higher sampling rates (note: a recent study revealed not much difference in accuracy beyond 10Hz), more metrics, MORE DATA. Data is fine when you have the manpower to handle it, but what about when you need to make sense of that data quickly, when it’s just you and an intern and no dedicated GPS analyst? What do you do? What good are 300 metrics when all you really need is 10 (and you’d be lucky if the coaches pay attention to more than 5)? What good is spending 3 hours in the office making a pretty report if, by the time it’s made, the coach has either left the building or already decided what they’re going to do for the next session or game? Did you genuinely influence their thought process and outcome? If not, then what’s the point? What are we doing here?

Speaking of making sense of data, there’s another big issue that one tends to experience when working in the trenches…

The Data Elephant in the Room

As a community, we’ve come a long way from 7-10 years ago, when such systems first entered the scene. We’re getting better and better at articulating the data to the coaches, the gatekeepers. A big influence on our software design and data visualization during the early days was David Tenney of Seattle Sounders, who made it clear to us that simply presenting loading wasn’t enough; coaches needed to know where you were loading the body for greater buy-in.

With regard to the use of EPTSs, the main goal of every club using such systems is to create an individual profile of each of their athletes/players: what their standard base performance data is, what their normal distance covered is, at what point of loading injury occurs, what they can normally handle, their risk, etc. Essentially, sports scientists must employ a longitudinal method of data collection over, I’d say, at least 3 years before they can truly start making sense of the data. In theory, that seems fine and dandy... in theory.

Reality is often an ugly beast though, isn’t it? When you get into the trenches, you realize such perfect conditions rarely exist. To be frank, a lot can happen in 3 years. In 3 years, probably half of those players you’re profiling will be gone, replaced with new players to profile. They’ll be gone too in a season or two, I bet. You’ll probably have data “black spots” for players who have been out with long-term injuries too. Heck, you’ll be lucky if you’re in the job for 3 years these days. Many of the bigger clubs value stability outside the coaching team and set up accordingly, giving continuity across departments to allow for stable data collection and profiling. And that’s how it should be, but we’re certainly not the ones to force such an industry change. We merely accept our working environment.

What does that condition mean? Well, it means the central goal, the very core reason, value and purpose of EPTSs, which is to profile players, can be a bit of a red herring. As the industry-known Craig Duncan once tweeted, “many sports scientists spend their time collecting the dots, rather than connecting them”. Essentially, many are stuck in a perpetual “data collection flux”, like some kind of data-collecting version of Jumanji (which actually sounds kinda fun).

Breaking the Cost and Research Barrier

As you’ve probably figured by now, I have been alluding to an alternative system that we’re finally about to launch, one born out of “the trenches”, but I really don’t want to talk about what we have quite yet; I’ll save that till the end. I could talk about other trenches problems, from data visualization to the idea of sports scientists being away from the coaches, on a laptop on the sideline, rather than by their side, acting as the “King’s hand” to the coaches (apologies, I’ve just finished a 50-hour marathon of Game of Thrones in 8 days, so I’m shoe-horning references into everything).

But there is one more I do want to discuss: cost and research. I say cost and research because the two are intrinsically linked.

Within the sports science community, there is a general resistance to the use of EPTSs from an academic perspective. There are still plenty of questions about reliability and validity, all of them valid. For instance, there are still questions about their use in certain types of stadiums. (I’ve heard talk that having access to more satellites will solve this issue. It won’t, because I have not heard of a single high-precision GNSS module made in the last 7 years that only uses the American GPS system. It means your system is already using all the systems out there, bar Galileo.) All fair. There are also questions about the validity of certain algorithms, methods, etc.

There are critics of EPTS, and who can blame them? Imagine paying 30 grand for a brand new car and being sold on the horsepower, yet being told you’re not allowed to have a look at the engine. With papers using such systems few and far between, the industry is essentially chasing shadows. By the time you’ve even published a paper questioning an algorithm, a software update has already been sent out with a “new and improved” metric. Should you give up, or should you just keep chasing pavements? Even if it leads nowhere?

And I guess this is where I make my call to arms. If you’re reading this, we’ve either launched or are about to launch our Kickstarter campaign for our next-generation EPTS: the Precision-SSS. From a business perspective, I understand the idea of keeping custom algorithms closed; the sports science community can be a scary bunch, and can be swift to tear things down. Best to protect your product, then. But I believe such criticism doesn’t just come from being academics; it is also resistance to the idea of paying so much for a system that still revels in being a closed platform. We believe the only way to push research forward is to make things open and affordable. It means opening ourselves to criticism, sure, but at least we can say “hey, you have the system now. What research ideas do you have? Maybe you can find the answers.”

At Precision Sports Technologies, we believe we’re still at the early stages of identifying the best practice and application of EPTSs, and taking things deeper and deeper into a walled garden isn’t the way forward. Making systems less and less accessible isn’t the answer; we are now at the point where the latest systems don’t even give you access to your own raw data, forcing the use of custom software. This is sports science, not smartphone technology, and going the way of Apple isn’t the answer. If you go deeper into the rabbit hole, you’re much less likely to find light. Instead, we need to widen accessibility and put EPTS into the hands of as many people as possible, from researchers to data enthusiasts. We need to recognise and cater to the 95%, and not just the 5%. And lastly, we need to remember that sports science, research and technology aren’t just for team sports, but for all sports, from football to athletics, to hockey to boxing. We’ve only just scratched the surface of what EPTS can do for all sports; there’s still plenty of work to be done.
